
How many keys and values are there, and how large are they? For example, if the keys are longs (8 bytes) and the values are strings averaging 40 characters (at least 40 bytes each), the absolute minimum for 2 billion key-value pairs is (40 + 8) * 2E9 bytes = 96 GB, or roughly 100 GB.
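The arithmetic above can be written as a small Java helper. This is a sketch under the same simplifying assumptions: 8-byte long keys and 1 byte per character (Java 9+ compact strings store Latin-1 text that way; older JVMs use 2 bytes per char). It ignores object headers, references and the hash table itself, so the real heap footprint will be considerably larger. HeapEstimate and minimumBytes are hypothetical names.

```java
/**
 * Back-of-the-envelope lower bound for the raw payload of a key-value map.
 * Assumption: keyBytes + avgValueBytes per pair, nothing else; object headers,
 * references, String/array overhead and hash-table buckets are ignored.
 */
final class HeapEstimate {

    /** Raw payload bytes: pairs * (keyBytes + avgValueBytes). */
    static long minimumBytes(long pairs, int keyBytes, int avgValueBytes) {
        // multiplyExact throws ArithmeticException instead of silently overflowing a long
        return Math.multiplyExact(pairs, (long) keyBytes + avgValueBytes);
    }

    public static void main(String[] args) {
        // 2 billion pairs, 8-byte long keys, 40-byte values: 48 * 2e9 = 96e9 bytes
        long bytes = minimumBytes(2_000_000_000L, Long.BYTES, 40);
        System.out.printf("%d bytes = %.1f GB%n", bytes, bytes / 1e9);
    }
}
```

Using a long and multiplyExact matters here: 2 billion times anything quickly exceeds the range of an int.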

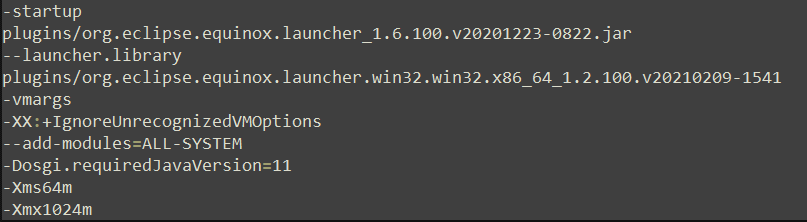

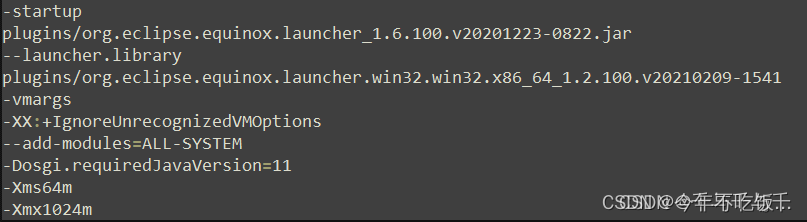

You need to make a rough estimate of the memory your map needs. Use a profiler and a heap dump analyzer to understand the Java heap and how much memory is allocated to each object.

Java heap memory is the part of memory the operating system allocates to the JVM, and whenever we create objects, they are created on the heap. For the sake of garbage collection, the heap is divided into three regions, or generations: the New Generation, the Old (tenured) Generation and the Permanent Generation (Perm Space). The garbage collector is responsible for reclaiming memory from dead objects and returning it to the heap; in the HotSpot JVM, the permanent generation is garbage-collected during a full GC. The heap is different from the stack, which stores the call hierarchy and local variables.

You can change the size of the heap with the JVM command-line options -Xms, -Xmx and -Xmn. Don't forget to add "M" or "G" after the size to indicate megabytes or gigabytes; for example, you can set the maximum heap size to 256 MB with java -Xmx256m javaClassName (your program's class name). You can use either JConsole or Runtime.maxMemory(), Runtime.totalMemory() and Runtime.freeMemory() to query the heap size programmatically.

Don't panic when you run out of heap space: sometimes it's just a matter of increasing the heap size, but if it happens repeatedly, look for a memory leak. You can take a heap dump with the "jmap" command and analyze it with "jhat" (jhat was removed in JDK 9).

-XX:MaxPermSize=#M sets the maximum "permanent generation" size. HotSpot is unusual in that several types of data get stored in the permanent generation, a separate area of the heap that is only rarely (or never) garbage-collected. The list of perm-gen data is a little fuzzy, but it generally contains things like class metadata, bytecode, interned strings, and so on (and this certainly varies across HotSpot versions). Because this generation is rarely or never collected, you may need to increase its size (or turn on perm-gen sweeping with a couple of other flags). In JRuby especially, we generate a lot of adapter bytecode, which usually demands more perm-gen space. (Java 8 removed the permanent generation in favor of Metaspace, so these perm-gen settings apply only to older JVMs.)
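The Runtime methods mentioned above can be combined into a tiny heap report. This is a minimal sketch; HeapInfo is a hypothetical class name. Per the Runtime Javadoc, maxMemory() returns Long.MAX_VALUE when there is no inherent limit, and on HotSpot it can report slightly less than the -Xmx value.

```java
/**
 * Minimal heap report built on java.lang.Runtime (HeapInfo is a hypothetical name).
 * Try running it as: java -Xms64m -Xmx256m HeapInfo
 */
final class HeapInfo {

    private static final long MB = 1024L * 1024L;

    public static void main(String[] args) {
        Runtime rt = Runtime.getRuntime();
        long max = rt.maxMemory();     // ceiling the heap may grow to (roughly -Xmx)
        long total = rt.totalMemory(); // heap the JVM has currently reserved (starts near -Xms)
        long free = rt.freeMemory();   // unused part of the reserved heap
        long used = total - free;      // live objects plus garbage not yet collected

        System.out.printf("max=%d MB, total=%d MB, free=%d MB, used=%d MB%n",
                max / MB, total / MB, free / MB, used / MB);
    }
}
```

Running it once with default settings and once with -Xmx256m is a quick way to confirm that a new limit actually reached the JVM, for example after changing an IDE's launch settings.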

-Xms and -Xmx set the minimum and maximum sizes for the heap. Touted as a feature, HotSpot puts a cap on heap size to prevent it from blowing out your system, so once you figure out the maximum memory your app needs, you cap it to keep rogue code from impacting other apps. Use these flags like -Xmx512M, where the M stands for MB; if you leave the suffix off, you're specifying bytes. You can also get a minor startup performance boost by setting the minimum higher, since the JVM doesn't have to grow the heap right away.

In the code below, the ResultSet processes around 500,000 records, but then it fails with this error:

    Exception in thread "main" : Java heap space
        at (HashMap.java:508)
        at (LinkedHashMap.java:406)
        at (HashMap.java:431)
        at (CompareRecord.java:91)

I know this error comes from running out of VM memory, but I don't know how to increase the memory in Eclipse. What do I do if I have to process even more than 500,000 records?

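    // Imports: java.sql.{Connection, ResultSet, Statement}, java.util.{Iterator, LinkedHashMap, Map}
    Connection conn = new CompareRecord().getConection("eliteddaprd", "eliteddaprd", "192.168.14.104", "1521");
    CompareRecord record = new CompareRecord();

    Statement stmt = conn.createStatement();
    ResultSet res = stmt.executeQuery("select rowindx,ADDRLINE1 from dedupinitial order by rowindx");

    // Every row is copied into the map, so heap use grows with the size of the table
    Map<Integer, String> addressMap = new LinkedHashMap<>();
    if (res != null) {
        System.out.println("result set is not null ");
        while (res.next()) {
            addressMap.put(res.getInt(1), res.getString(2)); // likely the put() in the stack trace
        }
    }
    System.out.println("address Map size => " + addressMap.size());

    Iterator<Map.Entry<Integer, String>> it = addressMap.entrySet().iterator();
    while (it.hasNext()) {
        Map.Entry<Integer, String> pairs = it.next();
        Integer outerkey = pairs.getKey();
        String outerValue = pairs.getValue();
        String[] outerresult = p.split(outerValue); // p: not shown; assumed to be a java.util.regex.Pattern
        Iterator<Map.Entry<Integer, String>> innerit = addressMap.entrySet().iterator();
        // ... inner comparison loop that fills dupList (not shown in the original)
        System.out.println("Duplicate List Size => " + dupList.size() + " " + outerkey);
    }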
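
For more records than fit in the heap, one option is to stop copying the whole table into a map. This is a different technique from the pairwise comparison above, and it only finds exact duplicates: let the database sort the rows by address, then compare each row with the previous one, so only the current group of equal addresses stays in memory. A minimal sketch; SortedDuplicateFinder and findDuplicates are hypothetical names, and the rows are assumed to arrive as (rowindx, ADDRLINE1) pairs from a query ending in "order by ADDRLINE1" rather than "order by rowindx".

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;
import java.util.Map;

/**
 * Streaming exact-duplicate detection over rows already sorted by address.
 * Hypothetical helper: memory use is the current group plus the duplicate groups
 * returned, not the whole table. Null addresses are never treated as duplicates.
 */
final class SortedDuplicateFinder {

    /** rows: (rowindx, address) pairs sorted by address. Returns rowindx groups of size >= 2. */
    static List<List<Integer>> findDuplicates(Iterator<Map.Entry<Integer, String>> rows) {
        List<List<Integer>> duplicates = new ArrayList<>();
        String currentAddress = null;               // address of the group being built
        List<Integer> currentGroup = new ArrayList<>();

        while (rows.hasNext()) {
            Map.Entry<Integer, String> row = rows.next();
            String address = row.getValue();
            if (address != null && address.equals(currentAddress)) {
                currentGroup.add(row.getKey());     // same address as the previous row
            } else {
                if (currentGroup.size() > 1) {
                    duplicates.add(currentGroup);   // previous group had duplicates
                }
                currentAddress = address;           // start a new group
                currentGroup = new ArrayList<>();
                currentGroup.add(row.getKey());
            }
        }
        if (currentGroup.size() > 1) {
            duplicates.add(currentGroup);           // flush the final group
        }
        return duplicates;
    }

    public static void main(String[] args) {
        List<Map.Entry<Integer, String>> rows = List.of(
                Map.entry(3, "1 MAIN ST"),
                Map.entry(7, "1 MAIN ST"),
                Map.entry(2, "2 OAK AVE"),
                Map.entry(5, "9 ELM RD"),
                Map.entry(9, "9 ELM RD"));
        System.out.println(findDuplicates(rows.iterator())); // [[3, 7], [5, 9]]
    }
}
```

With JDBC, the iterator would wrap the res.next() / res.getInt(1) / res.getString(2) loop instead of filling a map, and Statement.setFetchSize() can hint the driver to fetch rows in batches. If the database uses a case- or accent-insensitive collation, sort and compare the same normalized value so that equal addresses really end up adjacent. Fuzzy matching like the original p.split() comparison needs a different approach and is not covered by this sketch.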
